Is GPT a Computational Model of Emotion?

Date:

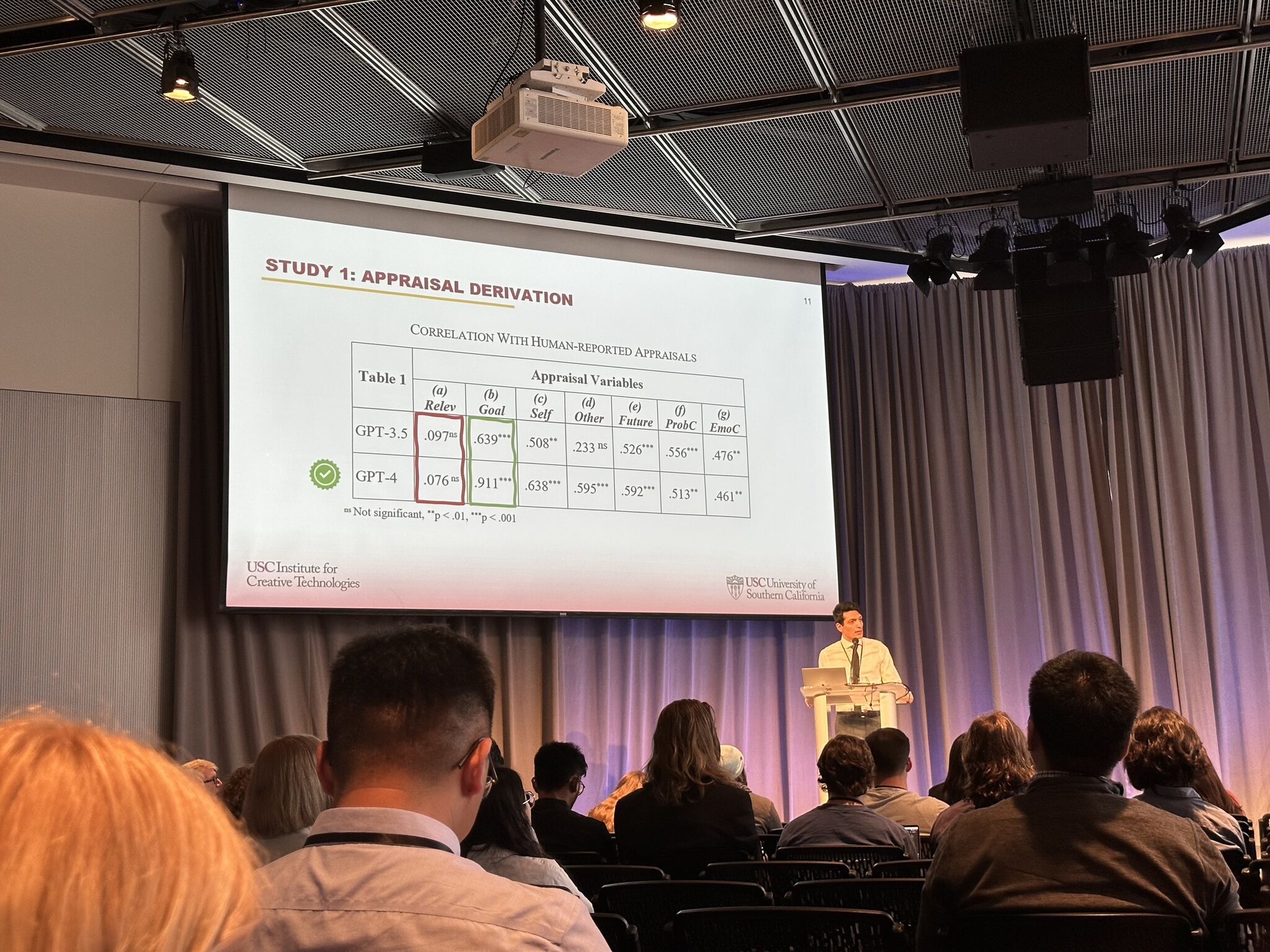

This paper investigates the emotional reasoning abilities of the GPT family of large language models. We advocate a componential perspective on evaluation that decomposes a model's emotional reasoning into distinct components: appraisal derivation, affect/intensity derivation, and consequent derivation. We report two studies. A correlational study examines how the model reasons about autobiographical memories. An experimental study systematically varies aspects of situations in ways previously shown to affect emotion intensity and coping tendencies. Results demonstrate that, even without prompt engineering, GPT's predictions closely match human-provided appraisals and emotion labels, though GPT struggled to predict emotion intensity and coping responses. GPT-4 performed best in the first study but poorly in the second (though it yielded the best results after minor prompt engineering). The evaluation raises questions about how to exploit the strengths and mitigate the weaknesses of such models, including how to handle variability in their responses. More fundamentally, these studies highlight the benefits of the componential perspective on model evaluation.